Generated AI image by Microsoft Bing Image Creator

Some months have passed since 2021 began (and we’re nowhere near safely out of the pandemic woods just yet). I’ve been itching to sit down and write my first blog post of the year.

What better way to start than by writing about the ins and outs of a couple of Python web frameworks?

I’ve been working on Python web projects for some time, and I’m here to offer my rants and thoughts on working with Django versus Flask/Falcon, and to outline how the two camps compare.

Let’s start with the ones I’m most familiar with.

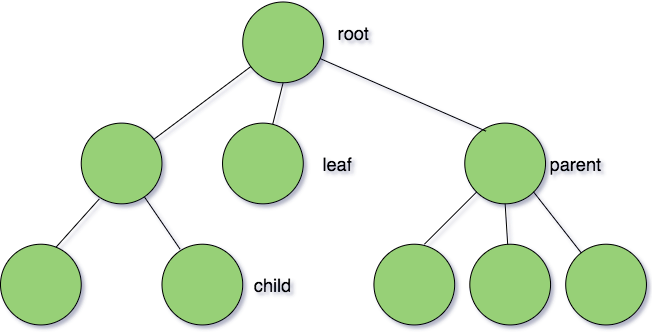

NB - Before I begin, this article assumes the reader has a good understanding or working knowledge of the MVC software pattern, as most web frameworks are built around it. If you don’t know what that is, you may want to visit this Wikipedia page for a refresher before reading on.

Flask/Falcon

First off the rank is Flask. 🌶

Flask’s software design philosophy is to help developers focus on simplicity of code design first. In practice, that means it’s a framework that doesn’t come with many of the ‘bells and whistles’ you need to build a web app, such as a production-ready web server, an authentication system, middleware libraries, service logic and database layers. (It does ship with the Jinja templating engine, but not much else.)

You get to handpick these yourself and decide what shape your app should take.

From project experience, I’ve used a number of well-known libraries that are popular in the Python developer community, including:

- SQLAlchemy - popular ORM for Python

- Alembic - a database migration tool written in Python

- Gunicorn - a popular WSGI HTTP server for running Python web apps

- Flask extensions, e.g. for file uploads, admin login, etc.

- Jinja templating engine

- Marshmallow - a library that simplifies object serialisation/deserialisation

So without further ado, let’s start with its basic layout.

Basic Layout

```python
from flask import Flask

app = Flask(__name__)

@app.route('/')
def hello_world():
    return 'Hello, World!'
```

We start by importing the Flask class and instantiating it to create the application object.

With app, we can then use its route decorator to map URLs to view functions, starting with a landing page that renders the home page’s content at /.
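To make the decorator idea concrete, here is a minimal sketch of how a route decorator can register view functions in a URL map and dispatch requests to them. MiniApp and dispatch are hypothetical names for illustration; this is a toy in the spirit of Flask’s routing, not Flask’s actual implementation.

```python
from typing import Callable, Dict

View = Callable[[], str]


class MiniApp:
    """Hypothetical toy app illustrating route registration; not Flask's code."""

    def __init__(self) -> None:
        # Maps a URL path to the view function that handles it.
        self.url_map: Dict[str, View] = {}

    def route(self, path: str) -> Callable[[View], View]:
        # route('/') returns the real decorator, which closes over `path`.
        def decorator(view: View) -> View:
            self.url_map[path] = view  # register the view under its path
            return view                # hand the function back unchanged
        return decorator

    def dispatch(self, path: str) -> str:
        # Look up the view for the path; unknown paths get a 404 message.
        view = self.url_map.get(path)
        return view() if view else '404 Not Found'


app = MiniApp()


@app.route('/')
def hello_world() -> str:
    return 'Hello, World!'


print(app.dispatch('/'))         # Hello, World!
print(app.dispatch('/missing'))  # 404 Not Found
```

The function is only registered when the decorator is applied with @; writing app.route('/') on a line of its own merely builds a decorator and throws it away, which is why the leading @ matters.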

Folder structure

```
app.py
config.py
requirements.txt
static/
templates/
```

Above is the basic folder structure, with a minimalist set of files: the base app file, the config.py settings file, requirements.txt for managing the app’s dependencies, and the static and templates folders. The static folder holds front-end assets such as jQuery/JS, CSS and any static HTML files, while templates holds all of your Jinja template files.

View Template

```python
from flask import render_template

@app.route('/hello/')
@app.route('/hello/<name>')
def hello(name=None):
    return render_template('hello.html', name=name)
```

On the V side of the MVC pattern, we import the render_template function and call it to render a particular Jinja template file (which, by default, Flask looks for in the application’s templates folder) for the URL route that was matched.

Database models

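```python
from flask import Flask
from flask_sqlalchemy import SQLAlchemy

app = Flask(__name__)
app.config['SQLALCHEMY_DATABASE_URI'] = 'sqlite:///app.db'  # example URI
db = SQLAlchemy(app)

class User(db.Model):
    id = db.Column(db.Integer, primary_key=True)
    username = db.Column(db.String(80), unique=True, nullable=False)
    email = db.Column(db.String(120), unique=True, nullable=False)

    def __repr__(self):
        return '<User %r>' % self.username
```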

On the M side of the MVC pattern, we use the popular ORM library Flask-SQLAlchemy (a wrapper on top of SQLAlchemy), which helps us define our entity models with column datatypes that are easy to understand and follow.
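To see what unique=True and nullable=False mean at the database level, here is a sketch of roughly the table the User model maps to, using the standard library’s sqlite3 module directly. The DDL is an assumption for illustration; the exact SQL SQLAlchemy emits varies by database dialect.

```python
import sqlite3

conn = sqlite3.connect(':memory:')
# Approximately the table behind the User model (SQLite does not enforce
# the VARCHAR lengths, but it does enforce NOT NULL and UNIQUE).
conn.execute(
    """
    CREATE TABLE user (
        id INTEGER PRIMARY KEY,
        username VARCHAR(80) NOT NULL UNIQUE,
        email VARCHAR(120) NOT NULL UNIQUE
    )
    """
)
conn.execute("INSERT INTO user (username, email) VALUES (?, ?)",
             ('ada', 'ada@example.com'))

try:
    # Same username again: the UNIQUE constraint rejects the row.
    conn.execute("INSERT INTO user (username, email) VALUES (?, ?)",
                 ('ada', 'other@example.com'))
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True

print(duplicate_rejected)  # True
```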

Routes

```python
@app.route('/')
def index():
    return 'Index Page'

@app.route('/hello')
def hello():
    return 'Hello, World'
```

On the C side of the MVC pattern, we use route decorators to tell Flask which URL should be dispatched to which view function (the controller) responsible for handling that request.

Nothing new here.

```python
from flask import Flask, render_template, request

app = Flask(__name__)

@app.route('/send', methods=['GET', 'POST'])
def send():
    if request.method == 'POST':
        age = request.form['age']
        return render_template('age.html', age=age)
    return render_template('index.html')

if __name__ == '__main__':
    app.run()
```

When working on purely server-side rendered (SSR) apps, we combine render_template with the request object: request.method tells us whether the form has been submitted, request.form gives us the submitted field values, and render_template renders the matching Jinja template.
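Under the hood, a form POST arrives as a URL-encoded body such as age=42, and request.form is the parsed version of it. Here is a framework-free sketch of the same GET/POST branching, using a hypothetical send function that returns the template name it would render instead of actually rendering it.

```python
from urllib.parse import parse_qs


def send(method: str, body: str = '') -> str:
    """Hypothetical framework-free version of the send() view above.

    Returns the name of the template that would be rendered.
    """
    if method == 'POST':
        # HTML forms are submitted as application/x-www-form-urlencoded.
        form = parse_qs(body)
        age = form['age'][0]  # parse_qs returns a list of values per key
        return f'age.html (age={age})'
    return 'index.html'


print(send('GET'))             # index.html
print(send('POST', 'age=42'))  # age.html (age=42)
```

In Flask, request.form does this parsing for you, which is what keeps the real view so short.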

Unit Tests

```python
import os
import tempfile

import pytest

# Assumes a package `flaskr` with a module `flaskr.py` that defines
# `app` and `init_db()`, as in the older Flask tutorial layout.
from flaskr import flaskr


@pytest.fixture
def client():
    db_fd, flaskr.app.config['DATABASE'] = tempfile.mkstemp()
    flaskr.app.config['TESTING'] = True

    with flaskr.app.test_client() as client:
        with flaskr.app.app_context():
            flaskr.init_db()
        yield client

    os.close(db_fd)
    os.unlink(flaskr.app.config['DATABASE'])
```

When unit testing a Flask app, we can bring in popular Python testing frameworks like unittest or pytest without much difficulty. In the example above, the test module configures the application for testing and initialises a fresh temporary database. Note that all of this setup lives in a pytest fixture, so every test that takes a client argument gets its own test client and database, which are cleaned up afterwards. We then run the pytest command to get test feedback on our Flask app.

Simple, and not much different from any other unit and integration test suite.
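The fixture relies on a setup/yield/teardown shape: everything before yield runs before the test, and everything after it runs once the test finishes. The same pattern can be sketched with the standard library’s contextlib; temp_database is a hypothetical name, and pytest itself isn’t needed for this illustration.

```python
import os
import tempfile
from contextlib import contextmanager
from typing import Iterator


@contextmanager
def temp_database() -> Iterator[str]:
    """Same setup/yield/teardown shape as the pytest fixture above."""
    db_fd, db_path = tempfile.mkstemp()  # setup: create a throwaway DB file
    try:
        yield db_path                    # the test body runs here
    finally:
        os.close(db_fd)                  # teardown: runs even if the body raises
        os.unlink(db_path)


with temp_database() as path:
    existed_during_test = os.path.exists(path)

print(existed_during_test, os.path.exists(path))  # True False
```

With contextlib the try/finally is needed so teardown still runs when the body raises; pytest resumes a yield fixture after the test whatever the outcome, which is why the original fixture can do without it.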

That’s it for Flask!

Next, we have Falcon. 🦅

Like Flask, Falcon is a micro framework, but it focuses purely on building RESTful, API-driven applications, so there are no HTML views or templates here; routes map URLs to resource classes instead. It’s a minimalist framework that comes with few dependencies and doesn’t burden you with a heavy set of abstractions to come to grips with.

NB - You can build APIs with Flask as well, by the way. Flask is by no means built only for SSR apps. You can install the Flask-RESTful extension to build a minimal API; you can see working sample code here.

Basic Layout

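```python
from wsgiref.simple_server import make_server

import falcon

app = falcon.App()

if __name__ == '__main__':
    # Serve the app locally with the standard library's reference WSGI server.
    with make_server('', 8000, app) as httpd:
        httpd.serve_forever()
```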

To start, we import falcon and create the app object. Not much different from Flask’s counterpart.

Folder structure

```
api
├── .venv
└── api
    ├── __init__.py
    └── app.py
```

We have the above folder structure. Notice that, unlike Flask, there are no HTML templates to render.

API Resource Design

```python
import falcon


class MediaResource:
    def on_get(self, req, resp):
        """Handles GET requests"""
        resp.status = falcon.HTTP_200
        resp.content_type = falcon.MEDIA_TEXT
        resp.text = ('\nTwo things awe me most, the starry sky '
                     'above me and the moral law within me.\n'
                     '\n'
                     '    ~ Immanuel Kant\n\n')


app = falcon.App()
media = MediaResource()
app.add_route('/media', media)
```

Next, we start writing out our resource definition for our APIs.

As part of Falcon’s design philosophy, it adheres closely to the REST architectural style, and its conventions ‘guide you in mapping resources and state manipulations to actual HTTP verbs’.

So in the above example, we declared a MediaResource whose on_get responder handles incoming GET requests and builds the response. Falcon dispatches each request to the responder method matching its HTTP verb (on_get, on_post and so on), and app.add_route maps the /media URL to an instance of the resource.

Simple, nice and clean!
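The responder is picked from the HTTP verb: a GET request calls on_get, a POST calls on_post, and so on, while a verb with no matching responder gets a 405 Method Not Allowed. Here is a toy sketch of that dispatch idea; dispatch and Response are hypothetical names, not Falcon’s internals.

```python
from typing import Dict


class Response:
    """Minimal hypothetical response object for this sketch."""

    def __init__(self) -> None:
        self.status = '200 OK'
        self.text = ''


class MediaResource:
    def on_get(self, req: Dict[str, str], resp: Response) -> None:
        resp.text = 'Two things awe me most...'


def dispatch(resource: object, method: str, req: Dict[str, str]) -> Response:
    """Hypothetical toy: call the responder named on_<verb>, Falcon-style."""
    resp = Response()
    responder = getattr(resource, 'on_' + method.lower(), None)
    if responder is None:
        # No responder for this verb: answer 405 Method Not Allowed.
        resp.status = '405 Method Not Allowed'
    else:
        responder(req, resp)
    return resp


print(dispatch(MediaResource(), 'GET', {}).status)   # 200 OK
print(dispatch(MediaResource(), 'POST', {}).status)  # 405 Method Not Allowed
```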

Database models

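```python
from sqlalchemy import Column, Integer, String
from sqlalchemy.orm import declarative_base

# Plain SQLAlchemy: there is no Flask-SQLAlchemy `db` object in a Falcon app.
Base = declarative_base()

class User(Base):
    __tablename__ = 'user'
    id = Column(Integer, primary_key=True)
    username = Column(String(80), unique=True, nullable=False)
    email = Column(String(120), unique=True, nullable=False)

    def __repr__(self):
        return '<User %r>' % self.username
```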

Again, nothing much different here. I personally use the SQLAlchemy ORM library directly, and it works well for building the models behind data-rich RESTful applications.

Unit tests

```python
from falcon import testing

import myapp  # assumes myapp.create() returns the falcon.App under test


class MyTestCase(testing.TestCase):
    def setUp(self):
        super(MyTestCase, self).setUp()
        self.app = myapp.create()


class TestMyApp(MyTestCase):
    def test_get_message(self):
        doc = {'message': 'Hello world!'}
        result = self.simulate_get('/messages/42')
        self.assertEqual(result.json, doc)
```

```python
from falcon import testing
import pytest

import myapp


@pytest.fixture()
def client():
    return testing.TestClient(myapp.create())


def test_get_message(client):
    doc = {'message': 'Hello world!'}
    result = client.simulate_get('/messages/42')
    assert result.json == doc
```

When it comes to building unit tests for Falcon, it’s relatively straightforward.

You can add a test framework of your choice, i.e. unittest or pytest, without any difficulty, and achieve the same goals as with Flask’s unit test setup. The main difference is that Falcon ships testing utilities built specifically for it, such as the simulate_* methods (simulate_get, simulate_post and so on). These let you exercise full request/response cycles across all the common HTTP verbs, just like the client.simulate_get example above.
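The simulate_* helpers work because a Falcon app is a WSGI callable, so a test can call it in-process without a running server. Here is a rough sketch of that idea using only the standard library; messages_app stands in for the hypothetical myapp.create(), and simulate_get here is a toy, not Falcon’s testing.TestClient.

```python
import json
from typing import Any, Callable, Dict, List, Tuple
from wsgiref.util import setup_testing_defaults


def messages_app(environ: Dict[str, Any], start_response: Callable) -> List[bytes]:
    """Hypothetical WSGI app standing in for myapp.create()."""
    headers = [('Content-Type', 'application/json')]
    if environ['PATH_INFO'] == '/messages/42':
        start_response('200 OK', headers)
        return [json.dumps({'message': 'Hello world!'}).encode()]
    start_response('404 Not Found', headers)
    return [b'{}']


def simulate_get(app: Callable, path: str) -> Tuple[str, Any]:
    """Toy in-process GET: build a WSGI environ, call the app, decode JSON."""
    environ: Dict[str, Any] = {'REQUEST_METHOD': 'GET', 'PATH_INFO': path}
    setup_testing_defaults(environ)  # fill in the remaining required WSGI keys
    captured: Dict[str, str] = {}

    def start_response(status: str, headers: list, exc_info: Any = None) -> Callable:
        captured['status'] = status
        return lambda data: None  # PEP 3333: start_response returns write()

    body = b''.join(app(environ, start_response))
    return captured['status'], json.loads(body)


status, doc = simulate_get(messages_app, '/messages/42')
print(status, doc)  # 200 OK {'message': 'Hello world!'}
```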

Now that we’ve got those two out of the way, with a firm understanding of how these minimal frameworks work, let’s check out the Django side of the fence ⚠️ ⚠️⚠️ ⚠️

Django

I recently worked on a Django project. Django is a fully-fledged Python MVC framework that has been around in the community for a very long time.

To recap, MVC (as mentioned earlier in this post) is one of the oldest and most common software design patterns; any veteran will tell you they’ve been using it for years, going back to the early days of the web more than 20 years ago.

With this design pattern, you would expect to see Python code written for each layer of the application’s componentised design.

To start, you run:

django-admin startproject myhomepage

Application Scaffolding - Folder structure

```
myhomepage/
    manage.py
    myhomepage/
        __init__.py
        settings.py
        urls.py
        asgi.py
        wsgi.py
```

After running the django-admin command, you will be greeted with the basic Django folder structure above. At the top is the container project folder named myhomepage, which is the name you chose in the first step. Then there’s manage.py, the utility script that lets you interact with the Django project through CLI commands.

Inside it is a subfolder, also named myhomepage. This one is the actual Python package for your project, containing the core files that configure the Django app itself.

Within this folder, you’re greeted with the following:

- __init__.py - an empty file that tells Python this directory is a Python package.

- settings.py - the settings file for the Django project.

- urls.py - where you declare all of the URLs of your Django-powered site.

- asgi.py - the entry point for ASGI-compatible web servers to serve the project.

- wsgi.py - the entry point for WSGI-compatible web servers to serve the project.

If you’re curious about the difference between the ASGI and WSGI web server interfaces, you can read more about it in this post.
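For a quick feel of the difference: a WSGI app is a synchronous callable that takes environ and start_response (PEP 3333), while an ASGI app is an async callable that takes scope, receive and send and exchanges event dictionaries with the server. Here is a minimal sketch of both, called by hand the way a server would (greatly simplified); the app names are hypothetical.

```python
import asyncio
from typing import Callable, List
from wsgiref.util import setup_testing_defaults


# WSGI: a synchronous callable, invoked once per request.
def wsgi_app(environ: dict, start_response: Callable) -> List[bytes]:
    start_response('200 OK', [('Content-Type', 'text/plain')])
    return [b'Hello from WSGI']


# ASGI: an async callable that sends response events via `send`.
async def asgi_app(scope: dict, receive: Callable, send: Callable) -> None:
    assert scope['type'] == 'http'
    await send({'type': 'http.response.start', 'status': 200,
                'headers': [(b'content-type', b'text/plain')]})
    await send({'type': 'http.response.body', 'body': b'Hello from ASGI'})


# Drive the WSGI app the way a server would.
environ: dict = {}
setup_testing_defaults(environ)
wsgi_body = b''.join(wsgi_app(environ, lambda status, headers: None))

# Drive the ASGI app with hand-written receive/send coroutines.
sent: List[dict] = []


async def receive() -> dict:
    return {'type': 'http.request', 'body': b'', 'more_body': False}


async def send(message: dict) -> None:
    sent.append(message)


asyncio.run(asgi_app({'type': 'http', 'method': 'GET', 'path': '/'}, receive, send))
asgi_body = sent[-1]['body']
print(wsgi_body, asgi_body)  # b'Hello from WSGI' b'Hello from ASGI'
```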

Routes/Views/Templates

```python
from django.urls import path

from . import views

urlpatterns = [
    path('', views.index, name='index'),
    path('<int:question_id>/', views.detail, name='detail'),
    path('<int:question_id>/results/', views.results, name='results'),
    path('<int:question_id>/vote/', views.vote, name='vote'),
]
```

```python
from django.http import HttpResponse
from django.template import loader

from .models import Question


def index(request):
    latest_question_list = Question.objects.order_by('-pub_date')[:5]
    template = loader.get_template('myhomepage/index.html')
    context = {'latest_question_list': latest_question_list}
    output = template.render(context, request)
    return HttpResponse(output)

# ........
```

When it comes to building routes and views, Django has a plain concept of views: a view is a Python function responsible for coordinating the business logic and handing its output to an HTML template, which renders the page. To make a rendered page reachable in the browser, Django uses the URL declaration file, urls.py, where we place all of our well-defined URL routes for each view, e.g.

path('main/', views.index, name='index')

To view the rendered ‘index’ page, we configured the URL path main/ and hooked it up to the index function in our views module. So you would see the rendered content at http://localhost:8000/main/ when using Django’s development server.

With this approach, expect a long laundry list of views/templates to route and render via the urlpatterns list.
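Route patterns such as <int:question_id>/ do two jobs: they match the URL segment and convert it before passing it to the view as a keyword argument. Here is a toy resolver sketching that idea with regular expressions; it covers only int and str converters, and resolve and CONVERTERS are hypothetical names, not Django’s internals.

```python
import re
from typing import Dict, Optional

# Hypothetical toy converters, mirroring Django's 'int' and 'str'.
CONVERTERS = {
    'int': (r'[0-9]+', int),  # (regex, Python conversion)
    'str': (r'[^/]+', str),
}


def resolve(route: str, path: str) -> Optional[Dict[str, object]]:
    """Match a Django-style route like '<int:question_id>/vote/' against a path.

    Returns the keyword arguments a view would receive, or None if no match.
    """
    kinds: Dict[str, str] = {}

    def to_group(match: "re.Match[str]") -> str:
        kind, name = match.group(1), match.group(2)
        kinds[name] = kind
        return f'(?P<{name}>{CONVERTERS[kind][0]})'

    pattern = '^' + re.sub(r'<(\w+):(\w+)>', to_group, route) + '$'
    found = re.match(pattern, path)
    if found is None:
        return None
    # Convert each captured string, so the int converter yields a real int.
    return {name: CONVERTERS[kinds[name]][1](value)
            for name, value in found.groupdict().items()}


print(resolve('<int:question_id>/vote/', '5/vote/'))    # {'question_id': 5}
print(resolve('<int:question_id>/vote/', 'abc/vote/'))  # None
print(resolve('main/', 'main/'))                        # {}
```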

Database models

```python
from django.db import models


class Question(models.Model):
    question_text = models.CharField(max_length=200)
    pub_date = models.DateTimeField('date published')


class Choice(models.Model):
    question = models.ForeignKey(Question, on_delete=models.CASCADE)
    choice_text = models.CharField(max_length=200)
    votes = models.IntegerField(default=0)
```

When it comes to building database models, Django comes bundled with its own ORM. Like SQLAlchemy, it offers a Pythonic way of interacting with relational databases: creating, querying and manipulating tables, inserts and updates. The semantics for describing column data types are slightly different from SQLAlchemy’s, though, as they are bound to the field classes exposed by django.db.models.
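The on_delete=models.CASCADE option means that deleting a Question also deletes its Choices. Django actually emulates this cascade in the ORM rather than relying on the database constraint, but the effect on the data is the same, so here is a sketch using SQLite’s own ON DELETE CASCADE through the standard library’s sqlite3 module. Table names are simplified; Django would prefix them with the app label.

```python
import sqlite3

conn = sqlite3.connect(':memory:')
# SQLite only enforces foreign keys when this pragma is switched on.
conn.execute('PRAGMA foreign_keys = ON')
conn.executescript(
    """
    CREATE TABLE question (
        id INTEGER PRIMARY KEY,
        question_text VARCHAR(200) NOT NULL,
        pub_date DATETIME NOT NULL
    );
    CREATE TABLE choice (
        id INTEGER PRIMARY KEY,
        question_id INTEGER NOT NULL REFERENCES question(id) ON DELETE CASCADE,
        choice_text VARCHAR(200) NOT NULL,
        votes INTEGER NOT NULL DEFAULT 0
    );
    """
)
conn.execute("INSERT INTO question VALUES (1, 'What''s new?', '2021-01-01 00:00:00')")
conn.execute("INSERT INTO choice (question_id, choice_text) VALUES (1, 'Not much')")

conn.execute('DELETE FROM question WHERE id = 1')  # cascades to its choices
remaining = conn.execute('SELECT COUNT(*) FROM choice').fetchone()[0]
print(remaining)  # 0
```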

When it comes to building APIs, Django’s answer to Flask/Falcon is an external toolkit package that handles this well: Django REST framework (installed as djangorestframework). The toolkit is built specifically for API-driven architectures, since Django itself is a fully-fledged MVC framework geared towards server-side rendered web applications, where these layers are traditionally tightly coupled together.

To start with, you do the following.

Installation

pip install djangorestframework

When creating the app, you do the following:

```
django-admin startproject myapp
cd myapp
django-admin startapp tutorial
cd ..
```

You will then see the following folder structure:

```
myapp/
    db.sqlite3
    manage.py
    tutorial/
        migrations/
        __init__.py
        admin.py
        apps.py
        models.py
        tests.py
        views.py
    myapp/
        __init__.py
        asgi.py
        settings.py
        urls.py
        wsgi.py
```

Again, nothing much different from the previous Django setup, apart from the new tutorial app folder.

In your settings.py file, you add the following:

```python
INSTALLED_APPS = [
    # ....
    'rest_framework',
]
```

Once you’re done with this initial setup, you can begin laying out your API design like so:

API Resource Design Basics

```python
# tutorial/serializers.py
from rest_framework import serializers

from tutorial.models import Choice, Question


class QuestionSerializer(serializers.HyperlinkedModelSerializer):
    class Meta:
        model = Question
        fields = ['url', 'question_text', 'pub_date']


class ChoiceSerializer(serializers.HyperlinkedModelSerializer):
    class Meta:
        model = Choice
        fields = ['url', 'question', 'choice_text', 'votes']
```

```python
# tutorial/views.py
from rest_framework import permissions, viewsets

from tutorial.models import Choice, Question
from tutorial.serializers import ChoiceSerializer, QuestionSerializer


class QuestionViewSet(viewsets.ModelViewSet):
    """
    API endpoint that allows questions to be viewed or edited.
    """
    queryset = Question.objects.all().order_by('pub_date')
    serializer_class = QuestionSerializer
    permission_classes = [permissions.IsAuthenticated]


class ChoiceViewSet(viewsets.ModelViewSet):
    """
    API endpoint that allows choices to be viewed or edited.
    """
    queryset = Choice.objects.all()
    serializer_class = ChoiceSerializer
    permission_classes = [permissions.IsAuthenticated]
```

```python
# myapp/urls.py
from django.urls import include, path
from rest_framework import routers

from tutorial import views

router = routers.DefaultRouter()
router.register(r'questions', views.QuestionViewSet)
router.register(r'choices', views.ChoiceViewSet)

urlpatterns = [
    path('', include(router.urls)),
    path('api-auth/', include('rest_framework.urls', namespace='rest_framework')),
]
```

```python
# tutorial/tests.py
from django.contrib.auth.models import User
from django.test import TestCase
from rest_framework.test import APIRequestFactory, force_authenticate

from tutorial.views import QuestionViewSet


class QuestionViewSetTests(TestCase):
    def test_update_question(self):
        # Assumes a Question with pk=4 exists (e.g. created in setUp).
        user = User.objects.create_user('tester', password='secret')
        factory = APIRequestFactory()
        # A viewset needs an explicit HTTP-method-to-action mapping here.
        view = QuestionViewSet.as_view({'put': 'update'})
        request = factory.put(
            '/questions/4/',
            {'question_text': 'new question', 'pub_date': '2021-01-01T00:00:00Z'},
            format='json',
        )
        force_authenticate(request, user=user)  # the view requires IsAuthenticated
        response = view(request, pk='4')
        response.render()  # response.content is only available after render()
        self.assertEqual(response.content, b"some_response_data")  # placeholder
```

With the above, Django REST framework introduces a few concepts we have to learn:

- Serializers

- Viewsets

- Hyperlinked APIs

- Routers

- Unit Testing

In step 1 - Django REST framework’s way of serializing model data into JSON responses is to subclass one of its serializer classes, such as HyperlinkedModelSerializer. A serializer converts complex data such as querysets and model instances into native Python datatypes that can then be rendered as JSON. Then, in the inner Meta class, we choose the model and which of its fields go into the response output, which also controls the output structure.
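Stripped of the DRF machinery, a model serializer boils down to reading the fields listed in Meta.fields from an instance and turning them into JSON-friendly primitives. Here is a hypothetical toy version to illustrate that idea; ToySerializer is not a DRF class, and the Question dataclass is a plain stand-in for the Django model.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Any, Dict, List


@dataclass
class Question:
    """Plain stand-in for the Django Question model."""
    id: int
    question_text: str
    pub_date: datetime


class ToySerializer:
    """Hypothetical stand-in for a DRF model serializer; not a DRF class."""

    class Meta:
        fields: List[str] = []

    def __init__(self, instance: Any) -> None:
        self.instance = instance

    @property
    def data(self) -> Dict[str, Any]:
        out: Dict[str, Any] = {}
        for name in self.Meta.fields:
            value = getattr(self.instance, name)
            # Turn non-JSON types into primitives (ISO 8601 for datetimes).
            out[name] = value.isoformat() if isinstance(value, datetime) else value
        return out


class QuestionSerializer(ToySerializer):
    class Meta:
        fields = ['question_text', 'pub_date']  # 'id' is deliberately left out


q = Question(1, "What's new?", datetime(2021, 1, 1))
print(QuestionSerializer(q).data)
# {'question_text': "What's new?", 'pub_date': '2021-01-01T00:00:00'}
```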

In step 2 - when designing the API resource endpoints that serve those responses, we have to understand the viewset, which is simply a class-based view that handles all the common HTTP actions without you having to write your own handlers. This means that methods like get(), post(), put(), patch() and delete(), which you would write in the Flask/Falcon world, are almost ‘non-existent’ here. Instead, a viewset gives you list, create, retrieve, update, partial_update and destroy actions, which, to me, are nothing more than syntactic sugar over their traditional counterparts. A viewset is simply an alternative to the Resource or Controller concept in API design.
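The action names map back onto HTTP verbs quite mechanically: on the list URL, GET means list and POST means create; on the detail URL, GET, PUT, PATCH and DELETE mean retrieve, update, partial_update and destroy. These are the mappings DRF’s routers use for a ModelViewSet. Here is a toy dispatcher sketching the idea; ToyQuestionViewSet and handle are hypothetical names.

```python
from typing import Dict, Optional

# The verb-to-action mappings DRF's routers apply to a ModelViewSet.
LIST_ROUTE: Dict[str, str] = {'get': 'list', 'post': 'create'}
DETAIL_ROUTE: Dict[str, str] = {
    'get': 'retrieve',
    'put': 'update',
    'patch': 'partial_update',
    'delete': 'destroy',
}


class ToyQuestionViewSet:
    """Hypothetical viewset: one method per action, not per HTTP verb."""

    def list(self) -> str:
        return 'all questions'

    def retrieve(self, pk: int) -> str:
        return f'question {pk}'


def handle(viewset: object, method: str, pk: Optional[int] = None) -> str:
    # Detail URLs carry a pk; list URLs don't.
    mapping = DETAIL_ROUTE if pk is not None else LIST_ROUTE
    action = mapping.get(method.lower())
    handler = getattr(viewset, action, None) if action else None
    if handler is None:
        return '405 Method Not Allowed'
    return handler(pk) if pk is not None else handler()


vs = ToyQuestionViewSet()
print(handle(vs, 'GET'))           # all questions
print(handle(vs, 'GET', pk=1))     # question 1
print(handle(vs, 'DELETE', pk=1))  # 405 Method Not Allowed (no destroy action)
```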

Interestingly, Django REST framework offers a few different viewset classes out of the box for your resource API needs, including:

- GenericViewSet - provides the generic view behaviour (such as get_queryset() and get_object()) but no actions by default. You add the actions you need by mixing in DRF’s action mixins (for example mixins.ListModelMixin), so you don’t have to rewrite the same logic across similar resources.

- ModelViewSet - gives you all six action handlers for read-write operations (list, create, retrieve, update, partial_update and destroy) in one API resource, just like the Question and Choice endpoints above, with barely any code beyond plumbing in the queryset, serializer class and permission classes. That’s incredibly insane!

One thing that caught my eye is another concept called hyperlinked APIs, which make an API more discoverable by representing relationships between entities as URLs instead of primary keys. For example, a choice’s question would be represented like this:

- Primary key style: "question": 1
- Hyperlinked style: "question": "http://myapp.tutorial.com/questions/1/"

In other words, instead of exposing the primary key of the related model instance (say, the Question with a primary key of 1), the serializer exposes the URL of that instance, which a client can follow directly. Each serialized object also gets its own url field, a HyperlinkedIdentityField, which builds the URL from a view_name (such as question-detail) and a lookup_field identifying the particular model instance (the primary key, pk, by default).

This is something which Flask/Falcon doesn’t do out of the box.

You can see more examples of exactly how it is used here.
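To make the difference concrete, here is a small sketch contrasting a primary-key representation of a Choice with a hyperlinked one. The ROUTES table and reverse function are hypothetical stand-ins for Django’s URL reversing, and the host name is just the example one used above.

```python
from typing import Dict

# Hypothetical route registry: view name -> path pattern, using the
# '<basename>-detail' naming style that DRF routers generate.
ROUTES: Dict[str, str] = {
    'question-detail': '/questions/{pk}/',
    'choice-detail': '/choices/{pk}/',
}


def reverse(view_name: str, pk: int, base: str = 'http://myapp.tutorial.com') -> str:
    """Toy reverse(): build an absolute URL from a view name and a lookup value."""
    return base + ROUTES[view_name].format(pk=pk)


choice = {'id': 7, 'question_id': 1, 'choice_text': 'Not much', 'votes': 0}

# Primary-key style: the client has to know how to turn 1 into a URL itself.
pk_style = {'question': choice['question_id'], 'choice_text': choice['choice_text']}

# Hyperlinked style: the relationship is a URL the client can simply follow.
hyperlinked = {
    'url': reverse('choice-detail', choice['id']),
    'question': reverse('question-detail', choice['question_id']),
    'choice_text': choice['choice_text'],
}
print(pk_style['question'])     # 1
print(hyperlinked['question'])  # http://myapp.tutorial.com/questions/1/
```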

In step 3 - once we’re happy with our API endpoints, we can configure our routes. Here we import DefaultRouter, which generates the URL patterns for each registered viewset (a list route and a detail route) along with an API root view; the route names it generates, such as question-detail, are what the hyperlinked serializers from the previous steps use to build their URLs. We then register each endpoint by passing two arguments: a URL prefix and a viewset class. On the surface, this looks pretty straightforward and not that much different from the Flask/Falcon counterparts, other than the fact that Django REST framework’s viewset classes give developers extra wiring options when mapping action handlers to URL routing rules.
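Roughly speaking, registering a prefix gives you two URL patterns per viewset, a list route and a detail route, named <basename>-list and <basename>-detail (DRF derives the basename from the queryset’s model, e.g. question). Here is a simplified sketch of that generation; ToyRouter and match are hypothetical, and they leave out DefaultRouter’s API root view and format suffixes.

```python
import re
from typing import Dict, List, Optional, Tuple


class ToyRouter:
    """Hypothetical simplification of the routes DefaultRouter generates."""

    def __init__(self) -> None:
        self.registry: List[Tuple[str, str]] = []

    def register(self, prefix: str, basename: str) -> None:
        # DRF normally derives basename from the viewset's queryset model;
        # this sketch takes it explicitly to stay self-contained.
        self.registry.append((prefix, basename))

    @property
    def urls(self) -> List[Tuple[str, str]]:
        patterns = []
        for prefix, basename in self.registry:
            patterns.append((f'^{prefix}/$', f'{basename}-list'))
            patterns.append((f'^{prefix}/(?P<pk>[^/.]+)/$', f'{basename}-detail'))
        return patterns


def match(router: ToyRouter, path: str) -> Optional[Tuple[str, Dict[str, str]]]:
    # Try each generated pattern in order, like a URL resolver would.
    for regex, name in router.urls:
        found = re.match(regex, path)
        if found:
            return name, found.groupdict()
    return None


router = ToyRouter()
router.register('questions', 'question')
router.register('choices', 'choice')
print(match(router, 'questions/'))    # ('question-list', {})
print(match(router, 'questions/1/'))  # ('question-detail', {'pk': '1'})
```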

Finally, in step 4, we look at how Django handles unit testing. Django REST framework builds on Django’s existing test framework and adds utilities for testing API calls specifically, such as the APIRequestFactory class. We instantiate the factory and use it to build a test request. Because a viewset is not a view by itself, we turn it into one with as_view() (for viewsets this takes a mapping of HTTP methods to actions, e.g. {'put': 'update'}) and call it directly with the request. Finally, we render the response so that we can run our assertions on its content straight after.
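The core trick of a request factory is that you build the request object yourself and hand it straight to the view, skipping URL routing and any running server. Here is a framework-free sketch of that test shape with unittest; ToyRequestFactory and question_update_view are hypothetical stand-ins, not DRF’s APIRequestFactory or a real viewset.

```python
import json
import unittest
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class ToyRequest:
    method: str
    path: str
    data: Dict[str, Any] = field(default_factory=dict)


class ToyRequestFactory:
    """Hypothetical stand-in for APIRequestFactory: builds requests, no server."""

    def put(self, path: str, data: Dict[str, Any]) -> ToyRequest:
        return ToyRequest('PUT', path, data)


def question_update_view(request: ToyRequest, pk: str) -> bytes:
    """Hypothetical view: echoes the 'updated' question back as JSON bytes."""
    return json.dumps({'id': int(pk), **request.data}).encode()


class QuestionUpdateTests(unittest.TestCase):
    def test_update_echoes_new_text(self) -> None:
        request = ToyRequestFactory().put('/questions/4/', {'question_text': 'new question'})
        # Call the view directly: no URL routing, no running server.
        content = question_update_view(request, pk='4')
        self.assertEqual(json.loads(content), {'id': 4, 'question_text': 'new question'})
```

Run it with python -m unittest as usual.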

And with that, that’s pretty much it for Django!

Thoughts and Opinions

With all that said and done, I finally come to the part where I offer my opinions on the two.

Based on my personal (as well as professional) bias, I prefer working with microframeworks to major frameworks like Django. To me, a good framework comes with the following:

- Simple code comes with simple design

- Easier and more powerful APIs to grasp

- Simple yet powerful design patterns, such as IoC/DI, that you can reason about

- Fewer (and cleaner) abstractions and dependencies for you to learn

- Ease of plugin integration to the web application ecosystem

- Freedom of architectural choice

- Unit/integration tests that are arguably more reliable, giving more accurate feedback on the system’s behaviour

When I read a design philosophy like this, it totally resonates with me. That’s the kind of software design mantra I want to stick by. I feel I have more ‘rights’ as a developer to decide how my application should be designed. As I mentioned with architectural freedom of choice earlier, microframeworks like Flask/Falcon give us the liberty to make design choices upfront when plumbing all the components together into a working product. Those design choices need to weigh up important factors such as familiarity with the technology, fewer deep-seated dependencies, cleaner abstractions and a lower learning barrier for new developers coming on board to enhance the product further down the line. With all this considered, I would argue that you can still achieve simplicity in code design as the application grows in complexity over time.

With major frameworks like Django, Rails or similar, I don’t have this ‘freedom’. They give you all these toolsets out of the box: ORMs, authentication and security systems, form generation, resource creation, serialization frameworks and so on. They’ve already made the design choices for you. The things I learned building in the microframework world can’t be wholly applied here. Their own concepts, like class-based viewsets and serializers, are hard for newcomers to grasp, and I already found them an initial barrier when learning to build a simple API endpoint.

On top of that, even once you get the API endpoint working, the abstractions are not straightforward to follow. For example, what’s the difference between GenericViewSet and ModelViewSet? Are they the same thing serving different responsibilities under the hood? If so, what other extra concepts do I need to come to terms with? Does it make designing endpoint resources easier than it was with Flask/Falcon or not? What major benefit do I gain from learning these extra concepts versus writing the simpler, easier code I write in Flask/Falcon? More importantly, will things be easier or harder to change should the framework developers decide to alter these concepts in the future? On all fronts, I would argue that these tools don’t help me make my code design any simpler.

It just made all the things harder.

While it’s harsh of me to say this, I have to be fair to them. To their credit, the primary benefit of major frameworks is that they save you time upfront when building your code. They introduce shortcut toolchains that reduce boilerplate, so you never find yourself writing repetitive lines of code all over the place. They do this through ‘black magic’ such as class-based viewsets that let you write DRYer code, and you’re well on your way to shipping the product sooner than you realised. That’s an attractive selling point for hitting deadlines early on, but in the long run it is not sustainable.

Sooner or later, you will often find yourself shooting yourself in the foot, paying the price when requirements change and the initial assumptions behind the code design no longer hold. When they change, those fewer lines of code have to change too. How much change, you may ask? More importantly, how big is the risk that the change ripples through the rest of the system’s data flow? And what about unit/integration testing? Once the DRY abstraction no longer serves you, will the rest of the application be prone to breaking further? It’s not so straightforward. That ‘black magic’ will come back to haunt you. 👻

If you ask me, major frameworks that are supposed to save you heaps of time eventually turn out to be more of a false economy than anything.

Major frameworks are famously said to favour convention over configuration. To me, as a developer, that means you aren’t expected to care much about the configuration behind the framework’s bells and whistles. They want you to embrace their conventions, trust that they do a good job and let them do the heavy lifting. You never have to worry about their design decisions. But those same design decisions come with a set of opinions made on behalf of the community, so you either embrace them wholeheartedly or suffer the consequences should things not work out in your favour.

Either way, what this reveals is that major frameworks like Django (arguably) force you to adopt their design philosophy and stick to it to the teeth, while Falcon/Flask are the complete opposite. For that reason, they make you feel real ‘lazy’ about writing good code, and you may never appreciate why writing good, simple code truly matters when steering your architecture in the direction you want.

All in all, I just want to say I’m not giving major frameworks out there a bad name.

These are just my opinions, and my opinions only.

I come from a different school of thought, having built high-end solution architectures for applications with teams of various sizes, where I’ve been fortunate to see good, simple and clean code architecture laid out. Every framework comes with pros and cons in its design. Django saves you time upfront by eliminating boilerplate, which is a pro, but it falls short if you later want to scale your design differently from its built-in conventions. Flask/Falcon may not have all the fancy ‘black magic’ toolsets Django comes with, but the major pro for me is that their learning curve is much gentler, and they encourage you to care deeply about design thinking while keeping good, clean and simple-to-understand code as the main goal.

I firmly believe that every problem domain we aim to solve needs the right tool for the job. If you think Django/Rails/Laravel or similar solves 80-90% of your problem and you don’t mind dealing with the conventions that come with it, then that’s the right tool for you. But if the same framework only gets you 50% or less of the way there and its conventions don’t serve any useful purpose for you, then Flask/Falcon or similar will be your guy.

Just remember that every framework’s design comes with certain trade-offs when it comes to building a good, scalable application.

For me, I would go for simple, clean code architecture. It trumps all that ‘black magic’ any time, any day.

That’s my two cents.

Till next time, Happy Coding!

Disclaimer: I’m not a framework expert by any means. I’m just making a high-level comparison between these and drawing my own conclusions on where they are good or not so good when building well-architected apps, with a bit of a dash of personal bias here ;)
